Chris Alarcon - 03 May, 2026

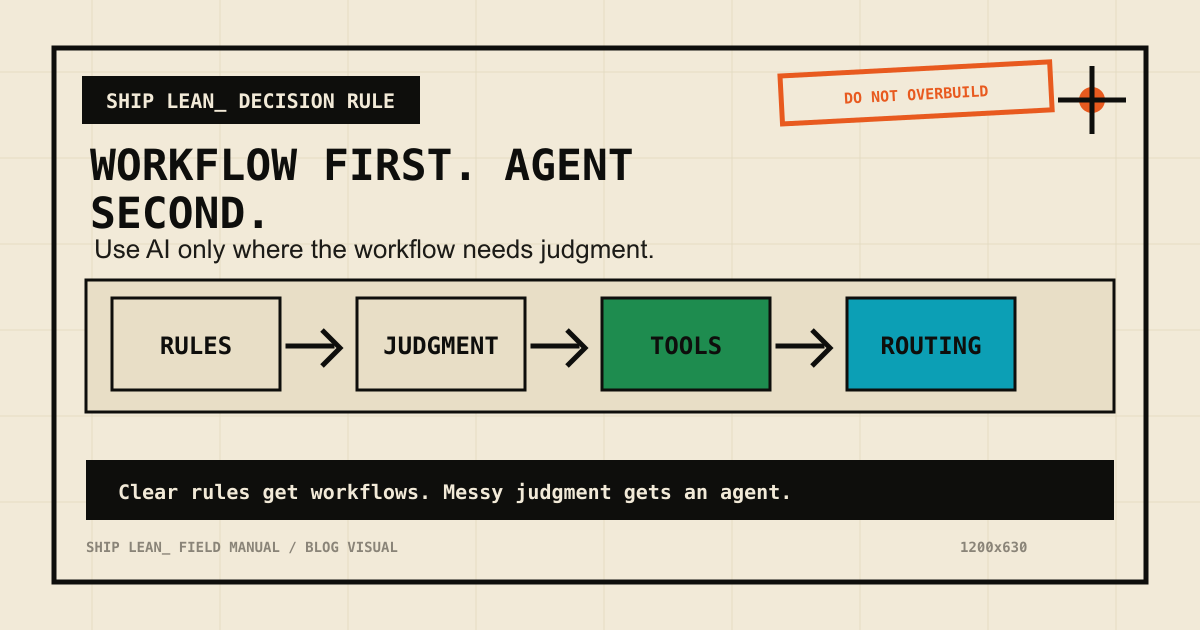

AI Coding Agent vs Workflow Automation

Quick answer: An AI coding agent builds and changes the system. Workflow automation runs the system. If you mix those jobs up, you either get a fragile script pretending to be operations or a giant canvas pretending to be a developer.

For Ship Lean, the clean split is:

Claude Code or Codex builds. n8n runs. Human approves.

That rule is the center of the n8n AI Agents hub.

The Actual Difference

| Layer | AI coding agent | Workflow automation |
| --- | --- | --- |
| Primary job | Build, edit, reason, test | Trigger, route, retry, log |
| Best context | Repo files, docs, diffs, terminal output | App data, schedules, webhooks, credentials |
| Output | Code, content, config, PR-ready changes | Runs, records, notifications, approvals |
| Failure mode | Bad edit or bad assumption | Broken credential, bad input, failed node |
| Best tools | Codex, Claude Code, Cursor | n8n, Make, Zapier |

An AI coding agent is closer to a builder. Workflow automation is closer to an operations layer.

Why This Matters for Organic Traffic

Modern SEO is not "write 50 posts and hope." The better system is:

1. Pull real demand signals from Search Console.
2. Identify pages Google is already testing.
3. Refresh the page with clearer answers, schema, internal links, and proof.
4. Build a tool, workflow, or comparison page when the query deserves it.
5. Route the work through human approval.
6. Measure again.

That system needs both layers. n8n can pull the data and create the weekly queue. Codex can read the page, update the repo, run the build, and verify the result. A human still approves the strategic claim.

When to Use an AI Coding Agent

Use an AI coding agent when the task asks for judgment across files:

- update title and description without breaking the site
- add FAQ schema through the existing content system
- compare two local pages and avoid duplication
- build a small tool or calculator
- fix a failed build
- turn a strategy doc into site changes

This is not just "generate text." It is editing inside a real system.
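To make one item from that list concrete: FAQ schema is usually emitted as JSON-LD using schema.org's `FAQPage` type. Here is a minimal sketch, assuming the FAQ entries are already available as question/answer pairs. The function name `build_faq_jsonld` and the sample data are hypothetical, and the way a real content system stores or injects this markup is not covered here.

```python
import json


def build_faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD.

    Hypothetical helper for illustration; a real site would wire this
    into whatever content system it already uses.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Sample data is made up for this sketch.
sample_faqs = [
    ("Who builds the system?", "An AI coding agent such as Codex or Claude Code."),
    ("Who runs the system?", "Workflow automation such as n8n."),
]
faq_markup = build_faq_jsonld(sample_faqs)
```

The resulting string normally goes inside a `<script type="application/ld+json">` tag in the page. Generating the markup is the easy part. The agent's actual job is finding where the existing templates expect it and confirming the build still passes.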
When to Use Workflow Automation

Use workflow automation when the task needs to happen on a trigger:

- every week, pull GSC data
- when a new page ships, add it to a promotion queue
- when a task is approved, send the next notification
- when a workflow fails, alert the owner
- when a form arrives, enrich and route it

This is not just "connect apps." It is making the repeatable parts visible and reliable.

The Mistake: Making One Tool Do Both Jobs

Bad setup:

| Mistake | What happens |
| --- | --- |
| Put all strategy and writing inside n8n prompts | Hard to version, review, test, and improve |
| Use a coding agent as a permanent scheduler | Weak run history, weak credential handling, fragile recurrence |
| Let automation publish directly | Fast mistakes with public consequences |
| Add agents to every workflow | Higher cost, slower runs, harder debugging |

The point is not to be maximalist. The point is to give each tool the job it can do cleanly.

The Ship Lean Pattern

For a solo builder, the working pattern looks like this:

| Stage | Owner | Example |
| --- | --- | --- |
| Signal | n8n | Pull Search Console and analytics data |
| Judgment | Codex or Claude Code | Decide whether to refresh, build, or ignore |
| Build | Codex or Claude Code | Edit content, code, schema, and links |
| Approval | Human | Confirm voice, risk, and business priority |
| Distribution | n8n | Route to GitHub, newsletter, social, or community |

That is how you turn AEO from a vague idea into a weekly operating system.

Simple Decision Rule

Ask: "Does this need project context or a repeatable trigger?"

If it needs project context, use an AI coding agent. If it needs a repeatable trigger, use workflow automation. If it needs both, connect them and add human approval before anything public ships.

Next, compare the two concrete tools: Codex vs n8n. If your workflow needs an agent step, read the n8n AI Agent Tutorial.
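The decision rule above is small enough to write down as a function. This is only a sketch: the `Task` fields, the `route` name, and the owner labels are hypothetical, and the empty result for a task that needs neither is an assumption the article does not address.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # All fields are illustrative; they mirror the two questions in the rule.
    name: str
    needs_project_context: bool
    needs_repeatable_trigger: bool


def route(task: Task) -> list[str]:
    """Return who should own a task under the simple decision rule."""
    if task.needs_project_context and task.needs_repeatable_trigger:
        # Both: connect the two layers and gate public output on a human.
        return ["ai coding agent", "workflow automation", "human approval"]
    if task.needs_project_context:
        return ["ai coding agent"]
    if task.needs_repeatable_trigger:
        return ["workflow automation"]
    # Assumption: a task needing neither is out of scope for the rule.
    return []


weekly_pull = Task("pull GSC data every week", False, True)
fix_build = Task("fix a failed build", True, False)
weekly_refresh = Task("refresh pages from weekly signals", True, True)

owners_for_refresh = route(weekly_refresh)
```

In this sketch the returned list names the owners involved and does not prescribe an execution order. The Ship Lean Pattern table is the better guide to sequencing.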